Just about a year ago I questioned the opinion of many analysts and bloggers who suggested that Siri was losing the personal digital assistant battle to Google Assistant and Alexa. I reasoned that Apple didn’t care about that ‘battle’; instead, it was improving Siri just enough to sell more of its devices. If only Apple had made the right investments in Siri, i.e., strengthened its apparently weak, some may say funny, ability to understand natural language and fundamentally improved its Natural Language Processing. It turns out Apple didn’t read my post ☺. Siri seems worse in some respects. For all the R&D investments that Apple made in 2018, I continue to be flummoxed by Siri’s answers when I ask for an estimated time of arrival while driving and Siri asks me to look at the screen. ☹ You know I’m driving, so why are you asking me to look at the screen? In the meantime, Apple launched the HomePod in February of 2018, and two features stood out: the sound quality and Siri. I decided before the holidays to give it a try and indeed what a sound, wow! Not as good as my smallish Polk stereo speakers, but way better than most smart speakers and as good as or better than the more expensive Google Home Max. As for the virtual assistant functionality, i.e., Siri, that’s another story.

In this blog I have been commenting on the battle among the personal digital assistants, specifically Alexa, Google Assistant, Siri, and Samsung Viv. Up until recently, Alexa only spoke English, but its polyglot abilities have improved. It now speaks German and Japanese too. It is catching up with Google Assistant but is still far behind Siri. Amazon Echo and Alexa had a strong international marketing push for the holidays, in over 80 countries. An unofficial estimate of devices sold in 2017 is 22 million, although the majority of them were the low-end Dot device.
How do you think buyers in non-English-speaking countries other than Germany or Japan are using the speaker if they cannot speak to it? In its aggressive push to earn the trust of 3rd parties, Amazon in 2017 pursued hardware manufacturers to embed Alexa in their devices. Most notably, BMW announced a new skill for Alexa so you can use Alexa at home and in the car, if you own a recent BMW. That was last year. At this week’s CES show in Las Vegas, Amazon was out in full force. Several hardware manufacturers announced Alexa-enabled speakers, alarm clocks, projectors, cameras, and appliances. Corbin Davenport reported the list here. And earlier this year, Amazon opened up Alexa’s voice recognition and NLP services by launching Amazon Lex, to allow 3rd parties to build chatbots based on the same technology used by Alexa. The conversational aspects of chatbots built on the Lex platform, and their ability to retain context, only seem to surface in the context of which ‘slots’ a chatbot requires. For example, a hotel booking chatbot must have an arrival date, checkout date, category, city, and price range. The conversation between the user and a hotel booking chatbot built on Lex will only occur in the context of these slots. In its quest for fast market dominance, Amazon should not discount the reservations about user experience. While app reviews are not conclusive, they are indicative of user sentiment. Comparing the 2017 reviews, the Google Assistant app is rated 4.2 and 4.1 out of 5 stars on Android and iOS, respectively. The Alexa app is rated 3.5 on Android and a mere 2.6 on iOS. We should expect Amazon to redirect some of its investments into making Alexa smarter and improving the user experience in 2018.

Quote borrowed from Oprah Winfrey (@Oprah). I thought it to be very appropriate for the time of year and for the state of affairs in our Government, and the shake-up of Business!
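Coming back to the slot-driven conversations described above for chatbots built on Lex, the idea can be illustrated with a minimal sketch. This is not the Amazon Lex API: the class name `BookingDialog`, the slot identifiers, and the prompt wording are assumptions made up for illustration, and only the five slots themselves come from the hotel booking example.

```python
from dataclasses import dataclass, field

# The five slots come from the hotel booking example in the post.
# The identifiers and the prompt wording are illustrative assumptions.
REQUIRED_SLOTS = {
    "arrival_date": "What day will you arrive?",
    "checkout_date": "What day will you check out?",
    "category": "Which hotel category would you like?",
    "city": "Which city are you staying in?",
    "price_range": "What price range do you have in mind?",
}


@dataclass
class BookingDialog:
    """Hypothetical dialog state: the conversation only exists to fill slots."""

    slots: dict = field(default_factory=dict)

    def next_prompt(self):
        """Return the question for the first unfilled slot, or None when done."""
        for name, prompt in REQUIRED_SLOTS.items():
            if name not in self.slots:
                return prompt
        return None

    def fill(self, name, value):
        """Record a slot value; anything outside the known slots is rejected."""
        if name not in REQUIRED_SLOTS:
            raise KeyError(f"unknown slot: {name}")
        self.slots[name] = value

    def is_complete(self):
        """True once every required slot has a value."""
        return self.next_prompt() is None
```

The sketch makes the limitation visible: the only context the dialog retains is the set of filled slots, so anything the user says outside those slots has nowhere to go.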

On a lighter note, for the new year, there is a very interesting article in today's WSJ Review section on how to make or break your New Year's resolutions of doing better at work, exercising more, etc., with supporting big data analyses and studies. A few excerpts:

Happy New Year!

Agile, Waterfall, or Hybrid?

If you happen to browse job ads and positions nowadays, you will come to realize that most if not all companies use the Agile development methodology. I have always wondered why it is made such a prominent factor in the job requirements. It’s just a methodology, and it's not like learning a new language. I’m not disputing the benefits of using Agile development but wondering if companies have become slaves to a methodology. Agile development lets you build and see products coming to life gradually, instead of working heads down for months fearful that you may not ever see the finished product. It’s also easier with Agile development to take detours, make corrections, ask for feedback, and adjust your aim along the way. But some companies still use the Waterfall methodology, and there are good reasons why Waterfall may still make sense today. The most common scenario: a program manager needs to provide and commit to a delivery date for a new product because the CFO, Marketing, or customers need it to budget expenses, plan the launch, or fund the deployment. For a product or a new major release, how would the PM go about estimating a delivery date for something that is going to take 9 or 12 months or longer, while executing the project in 2-week sprints and milestones? If they did commit, they would just be providing an informed guess with no plans to support it, and with a high risk of delivering something different from what they had committed to. The Waterfall methodology has been used for years for this reason, and it forced program managers to think through product design, architecture, frameworks, resources, critical paths, and so forth at the outset. These are issues that one cannot think through one sprint at a time and then hope that it will all come together. But there is Waterfall and, what I call, Hybrid Waterfall.
The Hybrid Waterfall is what I have used in these exact situations when confronted with a new product, or a major new release that replaced an existing architecture.

In a series of posts analyzing what is happening in the digital assistant platform race, this post discusses Samsung digital assistants.

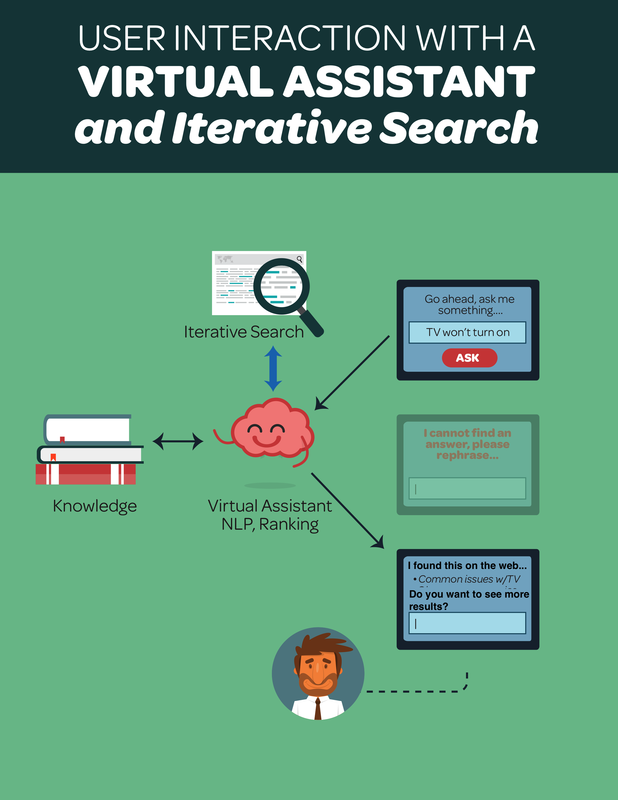

A personal digital assistant race would not be complete without a mention of Samsung, the other giant in consumer home and personal mobile devices. Samsung already had Bixby, its own virtual digital assistant platform, when it acquired Viv Labs in October of last year. Viv Labs was founded by the Siri founders, who had left Apple to pursue their dream of a universal digital assistant platform open to developers to create plug-ins for it. They had been working on the new digital assistant for four years when Samsung acquired them. Samsung must not have had much confidence in Bixby and appeared to have bought into the idea that Viv would find its way into all Samsung devices. So, it was a surprise when Samsung announced the Galaxy S8 earlier this year and the digital assistant shipped with the device was based on their original Bixby. Public statements still indicate that Samsung has big plans for Viv, and they may just be taking the time to bring it to market at the right time, in most if not all Samsung devices.

A few years ago, I proposed an Iterative Search architecture that, when used in conjunction with a Virtual Assistant, would help feed the conversational dialogs when the Virtual Assistant was at a loss for an answer. See white paper. In a different post on this blog, I commented on how the newest releases of Solr and ElasticSearch can supplement a Virtual Assistant in these scenarios. In fact, Search has now been adopted by Virtual Assistants for that same reason.
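To make the idea of search backing up a Virtual Assistant more concrete, here is a minimal sketch of a fallback step. It is not the architecture from the white paper: the function names, the word-overlap scoring, and the `min_score` threshold are all assumptions chosen for illustration, and `search` stands in for whatever engine (Solr, ElasticSearch, or a web search) returns candidate passages.

```python
import re


def tokens(text):
    """Lowercase word set; a deliberately crude stand-in for real NLP."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def overlap_score(question, passage):
    """Fraction of the question's words that also appear in the passage."""
    q = tokens(question)
    if not q:
        return 0.0
    return len(q & tokens(passage)) / len(q)


def answer(question, knowledge, search, min_score=0.5):
    """Answer from the assistant's own knowledge, else iterate over search hits.

    knowledge: dict mapping known questions to answers (hypothetical format).
    search:    callable returning a list of candidate passages for a query.
    """
    if question in knowledge:
        return knowledge[question]
    passages = search(question)
    if not passages:
        return None
    # Pick the passage that best matches the question instead of
    # handing the raw list of links back to the user.
    best = max(passages, key=lambda p: overlap_score(question, p))
    if overlap_score(question, best) >= min_score:
        return best
    return None
```

A real system would replace the word-overlap score with a proper relevance or answer-extraction model; the point of the sketch is only the control flow, where search results are examined further rather than shown as a list.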

Digital assistants, like Siri and Google Assistant, usually provide a specific answer to a question, e.g., ask “What is the oldest city in the world?” and they will give you the answer, or they do something for you when asked, e.g., “Turn the lights on in the living room.” The digital assistant, when confident of the answer, reads the answer aloud over the home speaker or phone, or confirms the action taken. What do they do if they have no knowledge about the question or do not understand the intent? They do a web search to find the best possible matches to the query. Siri and Google Assistant have improved this feature recently. You may have noticed that if they do not have a specific answer to your question, they show a list of web links containing the information you're looking for. Both have a filtering algorithm that ranks the links and shows only the top three or four. Siri used to use Bing for these search suggestions; it recently switched to Google Search. As expected, Google Assistant uses Google Search as well. Why are Siri and Google Assistant not iterating the search step further, to find the most likely answer to the question among the search suggestions and read it back aloud?

In a series of posts analyzing what is happening in the digital assistant platform race, this post discusses Apple Siri.

Apple pioneered the personal digital assistant space when they launched Siri in 2011 on their iPhone 4S. It received kudos and funny jokes from all parts of the industry, but overall it was a success. Steve Jobs lured the founders of Siri into Apple in 2010, but to everyone's surprise, the original Siri founders, and the rest of the team, started leaving Apple soon after the launch of the iPhone 4S. It is fair to assume, based on the recent announcements of Viv Labs, that the Siri team realized that their objective to make Siri a universal digital assistant for everyone was not Apple’s objective. Apple’s interest in Siri was another of Jobs' moments of genius: he saw better devices and better user experiences because of Siri. Siri helped Apple sell more products by improving the products' user experience and helping customers become more productive. The departure of the Siri team must have created some internal challenges at Apple and was possibly the reason for the slowdown and the subsequent slow reaction to Alexa. Even after this turbulence, though, many still see Siri as a smarter digital assistant than Alexa. Considering that iPhones are still selling like hotcakes, Mac has reached a remarkable 7% market share, and Watch is slowly displacing FitBit as the preferred activity tracker, I would say that Siri is certainly not driving customers away. Apple management is not losing sleep over Siri's challenges, but they recognize it needs to keep pace for the simple reason of not allowing competitors an opportunity to encroach on their customer base.

In a series of posts analyzing what is happening in the digital assistant platform race, this post discusses Google Assistant.

Google Assistant technology debuted in Google’s messaging app Allo and the Google Home speaker in 2016. Google Home was a direct response to Amazon. Google may be perceived as the latest entrant in the digital assistant race, but Google Assistant's core technology has been in the works at Google for many years. Google has been investing in NLP since its beginning, so the assistant was in a way a small step for them to be able to compete with Amazon. Google's success in Search and AdWords is due primarily to their early understanding of, and investments in, Natural Language Processing. Google so far has the best commercial conversational platform because of this history.

In a series of posts analyzing what is happening in the digital assistant platform race, this post discusses Amazon Alexa and the devices it is sold with: Amazon Echo and Echo Dot.

Amazon Alexa has taken the breath away from Siri. Amazon, like Apple, understood that speech was a medium that could make people more productive and get things done faster. Amazon's main strategy at the start was to drive customers to their online store. It was a brilliant positioning when they launched the Echo as a speaker that could execute voice commands. They opened the platform to developers only a few months after the launch of the Echo, and after almost two years there are more than 10,000 new skills developed by 3rd parties (as of February 2017). Apple proved with the App Store that you could make a platform incredibly successful if the developers come and build apps, useful and beautiful apps. Amazon is following the same script with a vengeance and speed that is remarkable.

In a white paper a few years back, I opined that adoption of Virtual Digital Assistant platforms was being slowed down by the fact that building and maintaining their ‘knowledge’ was still a predominantly manual task and therefore expensive and slow. Over the last 20 years, many companies have adopted Virtual Assistants on their websites, some with reasonable success, showing consistent ROIs and improved customer satisfaction. However, Virtual Assistants never became pervasive during the last decade. Many early adopters were excited about the possibilities and envisioned new uses for them, not just for customer support but also as hostesses and marketing/product advisors. But one of the issues that muted this excitement was the inefficiency of the knowledge management process. These solutions relied on rule-based systems that required manual intervention to retrain the virtual assistants. While these systems did use machine learning for certain features, e.g., to adjust keyword weights or remember frequently used responses to queries, the creation and modification of the dialogs was pretty much a manual effort.
If in doubt, companies accepted the alternative of just placing a static FAQ or a search engine that indexed the knowledge automatically, even if the user experience was not nearly as good. This attitude has changed over the last few years, and companies are now recognizing the importance of the conversational user experience to retain customers. For the virtual assistant platforms still around, this could be a renewed opportunity for a breakthrough. The rule-based Virtual Assistants are better for businesses than the modern ML-based models, for two critical reasons: 1) the amount and quality of data needed to build reliable ML-based systems, 2) the simplicity and predictability of rule-based systems.

In a white paper a few years back, I proposed the Iterative Search architecture that, when used in conjunction with a Virtual Assistant, would help feed the conversational dialogs when the Virtual Assistant was at a loss for an answer. (link to article)

It advocated three steps:

What are Solr and ElasticSearch? In 2010 Solr merged with the Lucene project, and ElasticSearch's first release came out. In the years that followed, Solr became the preferred open source distributed search, mostly for unstructured text, while the ElasticSearch team continued their parallel development. In recent years, ElasticSearch has surpassed Solr in new distributed search deployments for its ease of use and integration, and its grouping and filtering capabilities. Both are active open source projects. Elastic is the company behind ElasticSearch, not to be confused with Amazon ElasticSearch Service.

Why Solr / ElasticSearch? If you want to give your Virtual Assistant platform customers the option to add the Iterative Search step to the data flow, before returning an answer to the user, Solr and ElasticSearch are two equally valid open source choices to implement the Iterative Search.

In a prior post, I commented on how personal digital assistants and virtual assistants in general work and how they become smart in answering questions. While virtual assistants for businesses work just the same, when choosing a platform, businesses have other aspects to consider.

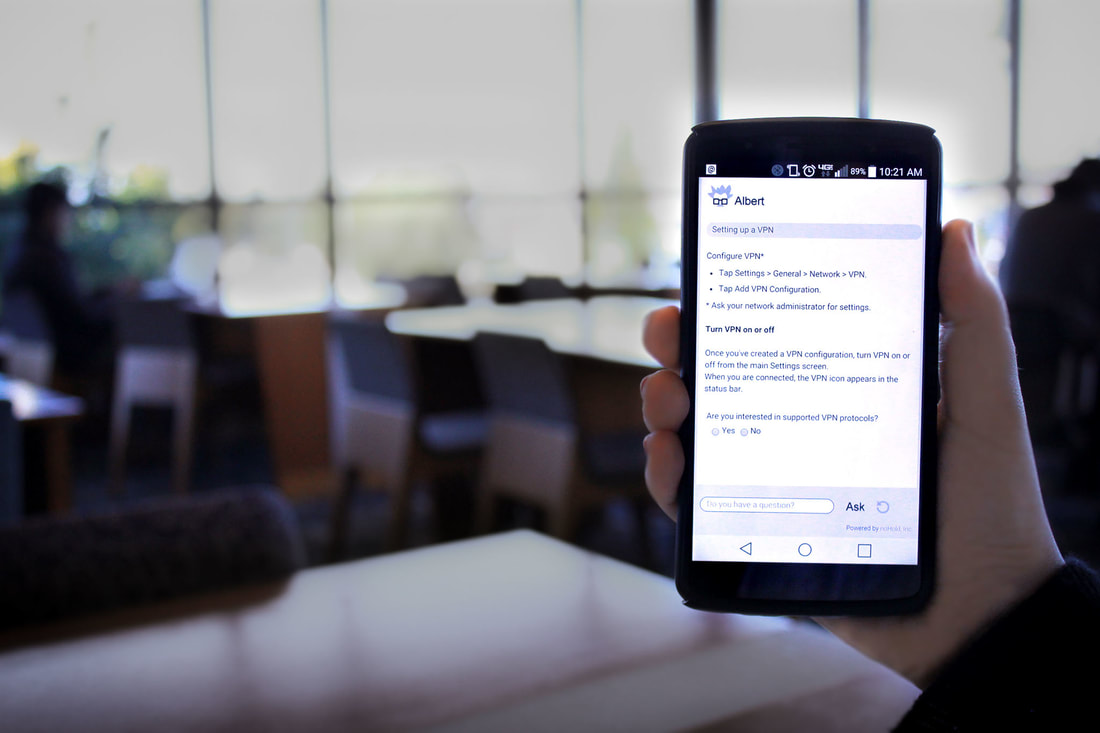

Typically, a business will rely on a virtual assistant platform that allows them to run the same algorithms over a different set of knowledge. Think of a technology company that has 100 products and wants to provide a virtual assistant service for each product. Building and coding virtual assistants 100 times is an expensive and time-consuming process. This is where companies such as NoHold come into play with their SaaS platform. Each virtual assistant uses precisely the same NLP, navigation, and inference algorithms on every product’s knowledge base. The only difference is the separation of dialog trees, structured hierarchical trees, and meta-data, with the answers available across any virtual assistant or 3rd party app. The rule-based approach, and in particular the NoHold platform, is in my opinion simpler and less costly for creating virtual assistants for businesses. It provides these benefits:

The technology world and bloggers are abuzz about the digital assistant platforms. A recurrent question you read is why Apple, which introduced the digital assistant to the masses, has fallen behind with Siri.

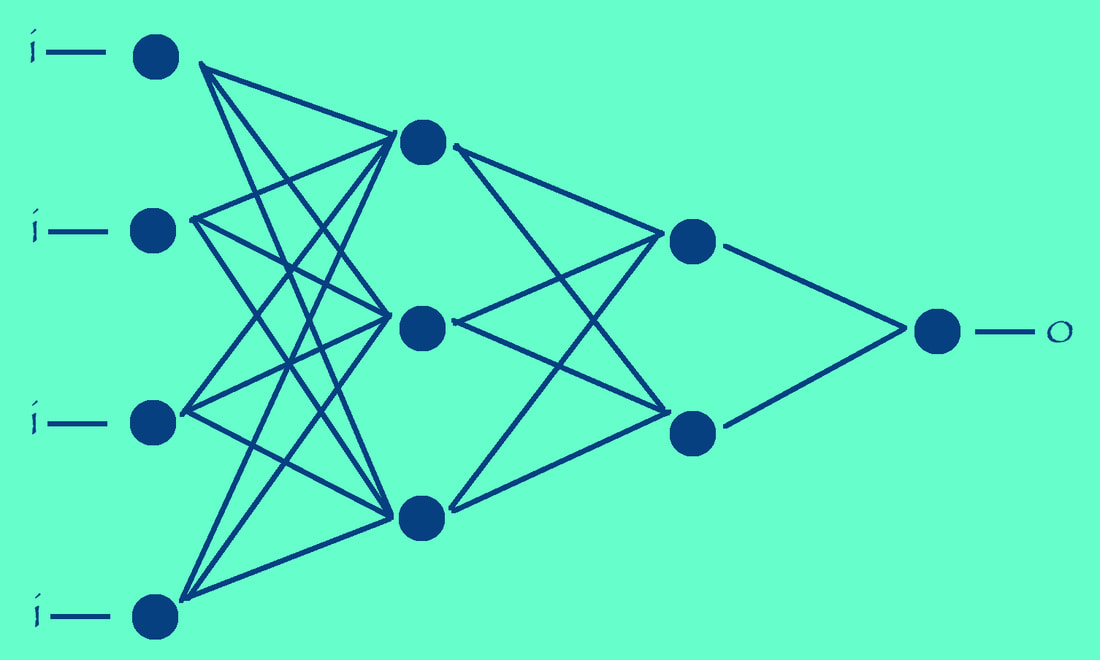

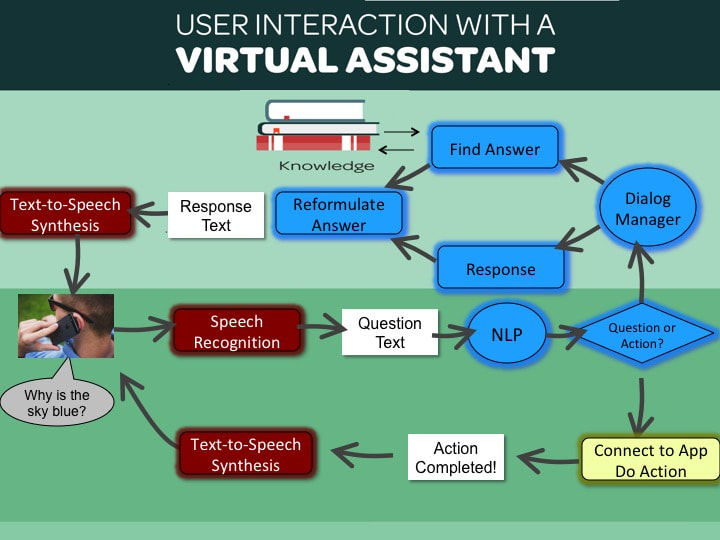

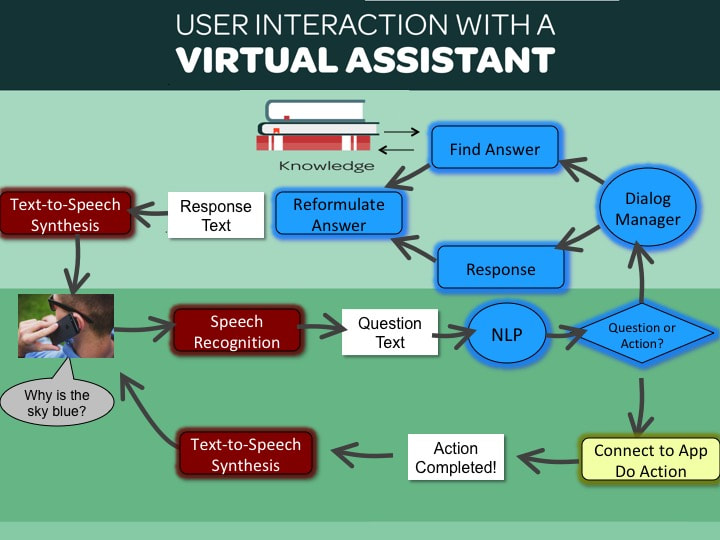

There is some truth to that, but many critics have been looking at the trees instead of the forest. The forest to me is the strategy each company is pursuing. I have no internal information, and I am not sure anyone does, but my feeling is that Apple believes it is meeting its objective of selling more products, while recognizing that the market is different from 2011 and that it has to operate more aggressively. The next few posts give my personal view on some of the digital assistant platforms and the reasons why they will be around years from now. Without stealing my own thunder, I would say that there will be a few of them around, and they will get along just fine. While there are some early leaders, this will be a long battle. To compare the different platforms I will use the 10-point comparison list from the earlier post “Are Chatbots, Virtual Assistants really that smart?”

Chatbots, digital assistants, and virtual assistants are all based on a conversational user interface, and they are not all equally smart or conversational. Traditionally, chatbots have been associated with conversational systems with a chat-like or messenger-type interface, while the personal digital assistants in your device can translate speech to text, do text-to-speech synthesis to read responses back to the user, and are more 'personal' in the sense that they can help you do things more quickly, for instance, sending a text or setting a reminder in your calendar. Virtual Digital Assistant or Virtual Assistant is just a generic term for both. Virtual Assistants can differ in many of the steps in the following diagram, which depicts the data-flow from the moment a user utters a question to the moment they get an answer. For instance, some Virtual Assistants are just Q&A chatbots and have a very limited dialog manager component, while others may lack speech recognition or the ability to understand languages other than English. In analyzing virtual assistants, these are some of the things they may or may not do:

I will be comparing some of the commercial virtual assistants in future posts and will use the above 10-point comparison list to highlight features or lack thereof.

Presentation or slideshow software allows anyone to illustrate concepts and topic overviews very quickly and simply. Microsoft® PowerPoint, Apple® Keynote, and Google® Slides are some of these popular software apps, with PowerPoint being the most common data format supported by all.

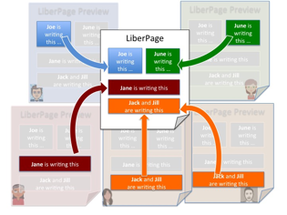

What if you want to engage your audience in virtual whiteboard sessions, or want to poll them on particular topics or take their pulse on how well they are grasping your presentation? Trainers, instructors, and teachers, for example, need just that, and while they use presentation software to deliver learning content, its effectiveness is limited by its inability to engage the audience with interactive canvas slides or polls. These users have to resort to separate applications, causing a non-ideal user experience for both trainers and trainees. The good news is that Microsoft and Google have opened up the online versions of their presentation software to developers to create integrated systems that enormously improve that user experience. We created such a system at LiberCloud, where users could not only use Microsoft PowerPoint Online to author their presentations but also integrate polls, surveys, and tests, as well as virtual whiteboards, in their online presentations. Please see this page for an overview of what we did and how we did it.

The above diagram depicts the typical data-flow from the moment a user utters a question to the moment they get an answer. Text or messenger-based chatbots work similarly but lack the speech recognition and synthesis steps.
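The data-flow can also be read as a simple pipeline in which the speech steps are optional. The sketch below is only an illustration of that reading: the function names and the two toy stages are assumptions, and a real assistant would plug in actual speech recognition, NLP, dialog management, and speech synthesis components.

```python
def run_assistant(user_input, understand, respond, recognize=None, synthesize=None):
    """Run one question through a hypothetical assistant pipeline.

    recognize and synthesize are the optional speech stages; a text or
    messenger-based chatbot simply leaves them out.
    """
    text = recognize(user_input) if recognize else user_input
    intent = understand(text)   # NLP: map the text to an intent
    reply = respond(intent)     # dialog manager: pick an answer or action
    return synthesize(reply) if synthesize else reply


def toy_understand(text):
    """Toy intent matcher used only to make the sketch runnable."""
    return "greeting" if "hello" in text.lower() else "unknown"


def toy_respond(intent):
    """Toy dialog manager with a single known intent."""
    replies = {"greeting": "Hi there!"}
    return replies.get(intent, "Sorry, I did not understand.")
```

Called as `run_assistant("Hello", toy_understand, toy_respond)`, the sketch behaves like a text chatbot; passing `recognize` and `synthesize` callables turns the same flow into a voice assistant.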

A key distinction among virtual assistants conveyed in the diagram is between question and answer assistants, whether for general questions or questions specific to a domain, and action assistants, those that will book an appointment in your calendar or book a hotel room for you, hence the name of personal digital assistants.

My interest in Virtual Assistants stems from the fact that I spent 12 years at NoHold, a small company in Silicon Valley providing a virtual assistant platform for businesses to build custom self-service virtual assistant applications.

Virtual assistants have been used mostly, and most successfully, for self-service customer support use cases to help consumers help themselves, and in the process reduce support costs and improve customer satisfaction. Most of these services have a very focused approach, i.e., they are targeted at specific domains of knowledge and have the same overall objective as knowledge management systems and search engines: help users find relevant answers to their issues or questions easily and quickly. Most of these systems use some form of AI to let users express their questions in natural language and guide users to find relevant answers, specifically the areas of AI known as Expert Systems and Natural Language Processing. The approach and sophistication of the NLP vary substantially, but it is most typically based on statistical analysis. These assistants are generally referred to as rule-based systems, because of the steps of having to manually create and maintain the rules on how to browse/navigate the corpus of domain knowledge. These systems have been around for many years, and they will still be around for many more as they bring real value, as in better productivity, to both consumers and businesses. A new wave of virtual assistants has emerged recently with the advent of smartphones: the personal digital assistants, e.g., Siri and Alexa. They are trying to solve the same general problem as virtual assistants, but differently. One thing in particular that they do differently is that they “do” things for you, as in booking an appointment in your calendar, setting an alert, connecting to your smart home devices, e.g., turning lights on or off, or making a reservation at a restaurant, etc., you get the idea. Users interact with the personal digital assistants primarily through voice, through the smartphone for Siri or the smart speaker for Alexa.
So, for the personal digital assistant to set an alert, for example, it needs to have a connection built with your reminder app, or with your travel booking app. The virtual assistant application provides an API and integration toolkit for these apps to integrate with. For Apple, so far, these APIs have been mostly for internal use, though that is most likely to change, while Amazon is pushing these APIs to external developers. Another difference with personal digital assistants is that they also attempt to give answers to any question you may have, but they do this poorly because that's a tall order: while performing the actions is rather 'simple' once you match the question to the predefined intents, answering questions of any kind and being smart is difficult. Apple’s Siri leverages a vast third-party knowledge base to do this, Wolfram Alpha from Wolfram Research, while Alexa supports only specific categories of trivia questions today. Where they fall short is in understanding the natural language questions, and therefore the answers are not helpful. It must be said that the speech recognition aspect works remarkably well, at least for Siri; it’s the handoff, processing, and understanding of the actual question text that is weak. NLP is an area that is improving by leaps and bounds thanks to a new breed of algorithms based on Machine Learning and Deep Learning. The NLP in both Alexa and Siri appears to be based on these ML models, and I am sure it will improve over time. ML-based NLP attempts to solve one of the inefficiencies of rule-based systems, as it doesn't require continuous manual effort by Knowledge Specialists to improve the system. With an ML-based NLP, once you have the model built and deployed, it's "supposed" to be easier and faster to maintain. However, getting the right ML model built is not easy either, and it requires lots of data, in most cases curated data that needs to be prepared and 'massaged' to be useful.
This work is done not by Knowledge Specialists, but rather by Data Scientists. If an ML model does not work well, it takes much longer to pinpoint where the problem is, and it requires highly skilled Data Scientists to do that. For a rule-based system, it's typically much easier to find out why the system gave the wrong answer and take corrective actions rather quickly. So, keep this in mind before you invest in Virtual Assistants; this is a critical aspect that you need to consider. It’s a fascinating world, and I will post here my thoughts from time to time on new trends and improvements in all aspects of virtual assistants, personal digital assistants, search, or cognitive search.

LiberCloud offers content management and collaboration services for content authors, educators, and instructors, as well as active learners. The SaaS platform allows individuals to sign up, and it gives educational institutions and businesses the ability to create dedicated instances and private communities for sharing or collaborating on content.

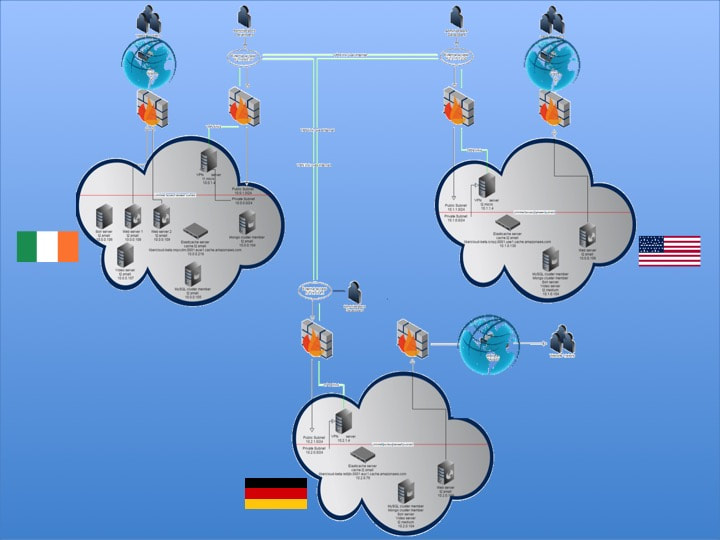

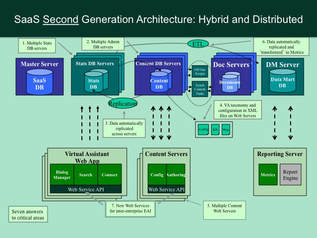

The multi-tenant SaaS platform, initially developed on the LAMP stack, was being expanded with a MongoDB NoSQL cluster, a Node.js component with Socket.io for asynchronous notifications, and an Ejabberd-based real-time XMPP server for group discussion rooms and live chat. The content management services support multi-media assets as well as interactive assets such as virtual whiteboards, real-time polls and surveys, and assessments. LiberCloud launched its beta services to the public in 2014 and announced general availability at the international Bett trade show in London in January 2015. The LiberCloud service was targeting international businesses or startups with a distributed workforce, clients, and user base. Therefore, it required designing and deploying the services in a distributed fashion, such that content originated in one region is available in others, and participants in real-time virtual discussions, polls, and group chats could be in any region. LiberCloud's intent to offer its services in the proximity of the organizations and users we serve required a distributed architecture spread across multiple geographical regions.

Many talent managers, educators, philosophers, and trainers have been advocating the adoption of flipped classroom training and blended learning techniques to improve assimilation of content. The main obstacles in these efforts are two-fold:

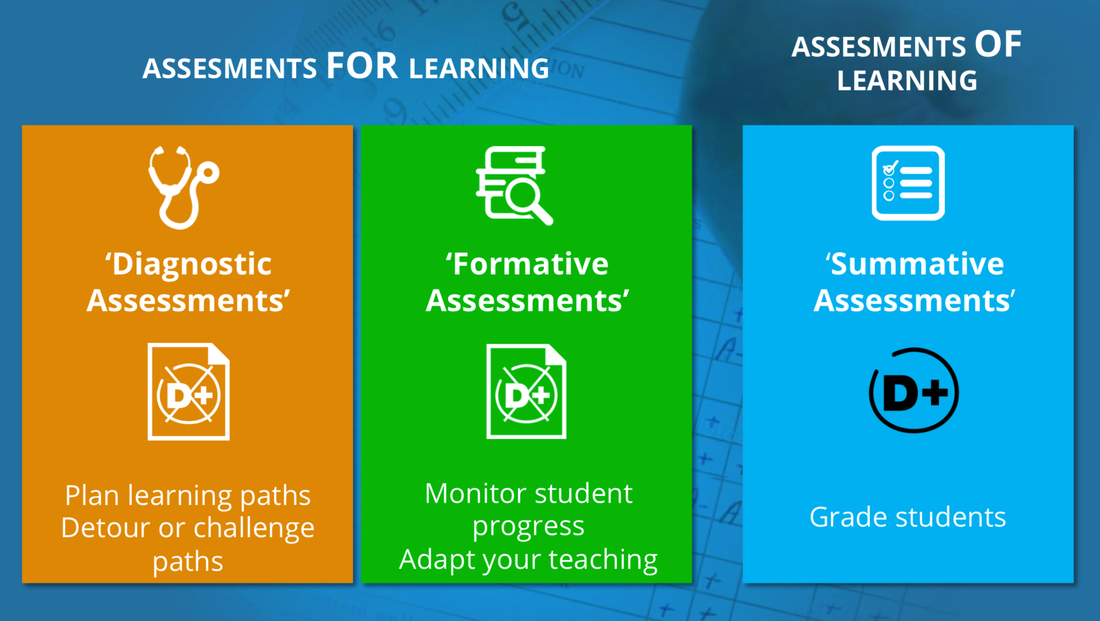

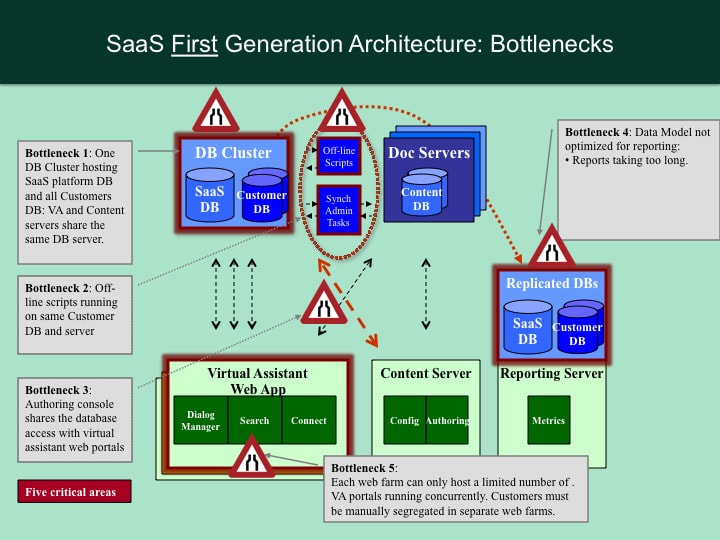

After an overview of how assessment policies have evolved, the white paper describes the facets of a new cohesive learning and assessment system to help educators implement differentiated instruction with assessments for learning, not just for grading, ultimately to achieve the goal of making sure trainees actually learn what is being taught. I introduced these concepts as a guest speaker at the Assessment Tomorrow conference in London City in March 2016.

A few years back I wrote a white paper documenting the effort and thoughts that went into building a first generation SaaS platform and its subsequent upgrade. While new technologies have been introduced, SaaS is now widely accepted by most companies to deliver their services, and building a SaaS-based application has become increasingly easier, there are some aspects of our original effort that were unique. We developed our first SaaS platform because the traditional web application architecture was inadequate to meet our business objectives. We were in uncharted territory, and customers demanded that we not mingle their data with other customers' data. So, a common thread in our releases was the isolation of customer data. Many customers today may require the same, for regulatory or privacy concerns, and I thought that sharing the thoughts that went into designing the first generation SaaS platform and its subsequent upgrade would benefit your efforts as well. Our first generation SaaS platform needed some basic components to improve the provisioning and configurability of the application. We needed:

After we launched the first generation platform, over the course of several months we had other important issues to tackle to ensure the scalability of the platform and of the business processes revolving around the operation of the entire SaaS platform. The most significant improvements were the decentralization of the database and the ability to use Machine Learning:

Collaboration platforms have existed for years in two primary areas: to collaborate on content and to share presentations. The first is primarily used in content management systems, general purpose ones and those specific to learning, while the second is a function of web conferencing services. The two services serve the same audiences but rarely have intersected from a functional standpoint.

Here we want to make the case that the two could and should intersect, in particular in a modern working and learning environment where content is created by brainstorming, discussions, and assessments. The proposed integrated environment is a lightweight cloud platform, based on the concepts of real-time collaboration on content and the “Virtual Whiteboard”, that provides more flexibility, removes the attachment to any particular physical device, and is easy for anyone to use. This white paper provides an overview of its features.

Today, we are showcasing LiberCloud at Bett London and announcing its official launch. I have been thinking of starting a blog detailing thoughts and events on technologies that have or will have an impact on our business and lives. This first post is about the ideas behind LiberCloud and the problems we are trying to solve.

The demands to reduce the workforce skills gap and improve content assimilation are increasing, and companies are faced with outdated processes and technologies to meet this challenge. Talent managers, trainers, and content developers have had to settle for narrow and outdated solutions to improve workforce skills, or for onboarding programs: for instance, instructor-led programs that did not scale, or self-paced programs that were not engaging. A new content platform is needed that combines the best of both methods.
